In brief:

The EU AI Act has been in force since August 2024 and is now the binding legal framework for artificial intelligence in Europe. It classifies AI applications by risk and sets strict rules for transparency, security, and human oversight. Companies must act now: from August 2026, the requirements for most high-risk systems will be fully in effect. Those who ignore compliance gaps in procurement or operations risk fines of up to €35 million or 7% of worldwide annual turnover for the most serious violations. The law marks the end of the “Wild West era” of AI and turns regulatory diligence into a genuine seal of quality for European companies.

Key Facts about the EU AI Act

- Core objective: Protecting health, safety, and fundamental rights through “trustworthy AI”.

- Status 2026: The bans already apply; most high-risk obligations take effect in August 2026.

- Roles: Clear duties for providers and deployers alike.

- Procurement: The central checkpoint for auditing third-party software for compliance.

- Mandatory training: Companies must ensure a sufficient level of AI literacy among their employees.

1. Definition: What is the EU AI Act?

The EU AI Act (Regulation (EU) 2024/1689) is the European Union's regulation governing the development and use of artificial intelligence. Its definition of AI is deliberately broad and aligned with the OECD definition: a system counts as AI if it operates with some degree of autonomy and infers from the inputs it receives how to generate outputs such as predictions, content, recommendations, or decisions.

Because it is a regulation, the law applies directly in every EU member state without national implementation. It covers any company that places AI systems on the EU market or whose AI output is used in the EU, regardless of whether the company is based in Berlin, New York, or Shanghai. In doing so, the EU is setting a global standard, as it did previously with the GDPR.

“Innovation without regulation is flying blind, but regulation without innovation is stagnation. The art lies in building guardrails that don't throttle the pace, but instead make the journey safe.”

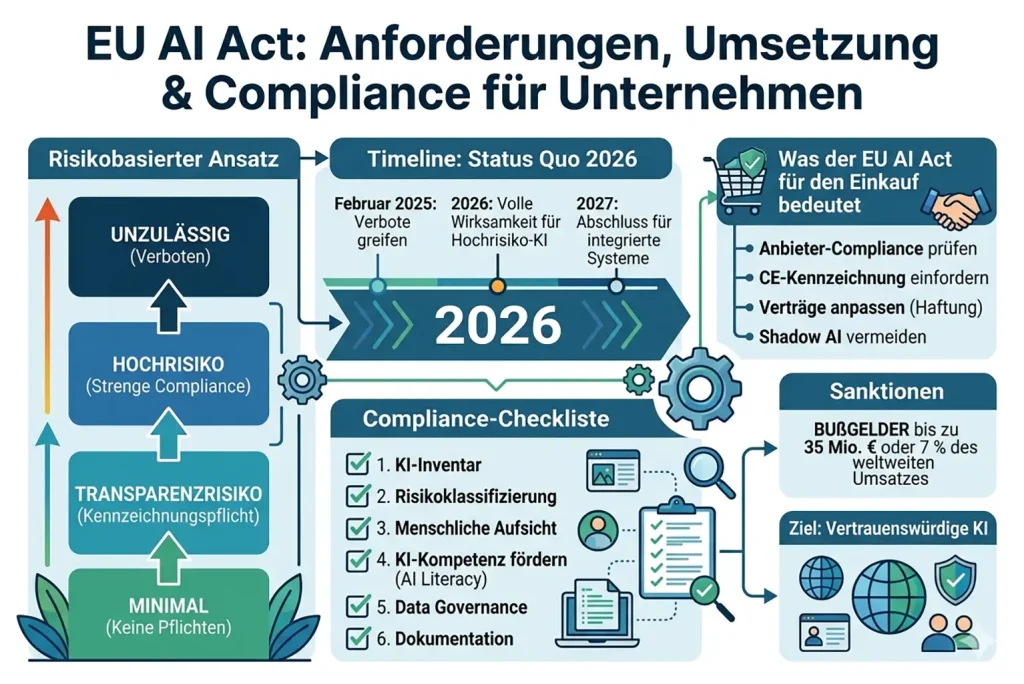

2. The EU AI Act's risk-based approach

The core of the regulation is the classification of AI systems into different risk classes. This approach prevents useful, harmless applications from being hampered by excessive bureaucracy, while dangerous applications are strictly controlled.

- Unacceptable risk (prohibited): This category covers applications with the potential to severely violate human dignity, such as social scoring (evaluating people based on their social behaviour), manipulative techniques that undermine free will, and real-time remote biometric identification in publicly accessible spaces for law enforcement, subject to narrow exceptions. These practices have been prohibited since February 2025.

- High-risk AI: This is where things become particularly relevant for companies in 2026. Systems that affect people's life chances (e.g., in education, recruitment and HR, or credit checks) are considered high-risk. They are subject to strict requirements regarding data quality, technical documentation, and transparency towards authorities.

- Limited risk (transparency obligations): Applications such as chatbots or deepfakes fall into this category. The main requirement is disclosure: users must know that they are communicating with a machine or that an image has been artificially generated.

- Minimal risk: This applies to the overwhelming majority of AI applications, such as simple spam filters or AI in video games. Here, the law does not provide for any additional obligations, other than compliance with general rules of care.
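The four tiers can be thought of as a lookup from risk class to the kind of obligations that follow. The Python sketch below encodes the simplified summary from the list above; the enum names, the example systems and the obligation strings are illustrative assumptions, not official identifiers, and the lists are not a complete statement of the law.

```python
from enum import Enum


class RiskTier(Enum):
    """The four risk tiers of the EU AI Act (names here are illustrative)."""
    UNACCEPTABLE = "prohibited"
    HIGH = "high-risk"
    LIMITED = "transparency obligations"
    MINIMAL = "minimal risk"


# Simplified summary of the main consequences per tier, paraphrasing the list above.
OBLIGATIONS: dict[RiskTier, list[str]] = {
    RiskTier.UNACCEPTABLE: ["banned: may not be offered or used in the EU"],
    RiskTier.HIGH: ["data quality", "technical documentation", "transparency towards authorities"],
    RiskTier.LIMITED: ["disclose interaction with a machine or label generated content"],
    RiskTier.MINIMAL: [],  # no additional obligations beyond general rules of care
}

# Hypothetical example systems, following the examples given in the text.
EXAMPLES: dict[str, RiskTier] = {
    "social scoring": RiskTier.UNACCEPTABLE,
    "CV screening tool": RiskTier.HIGH,
    "customer service chatbot": RiskTier.LIMITED,
    "spam filter": RiskTier.MINIMAL,
}


def obligations_for(system: str) -> list[str]:
    """Look up the tier of a known example system and return its obligation summary."""
    return OBLIGATIONS[EXAMPLES[system]]


if __name__ == "__main__":
    for system, tier in EXAMPLES.items():
        print(f"{system}: {tier.value} -> {obligations_for(system) or 'no additional obligations'}")
```

In a real inventory, the tier would be the documented outcome of a legal assessment rather than a hard-coded mapping.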

3. Timeline: The path to full effectiveness in 2026

The EU AI Act is being implemented in stages to give companies time to adapt their processes. As of April 2026, we are in a crucial phase of the transition. The Act entered into force in August 2024. Just six months later, in February 2025, the prohibitions on AI systems with unacceptable risk took effect. Companies that have ignored this deadline are already in the sights of supervisory authorities.

Since August 2025, the rules for general-purpose AI (GPAI) have also applied, i.e. for models such as large language models (LLMs) that can be used for a wide range of tasks. Their providers must draw up technical documentation, pass information on to downstream providers that integrate the model, and publish a summary of the content used for training. For the day-to-day practice of most companies, however, the most important milestone is August 2026. From then on, most high-risk systems must meet the full compliance requirements. This includes not only technical conformity but also a functioning risk management system. Companies that have not yet started their documentation will struggle to meet the deadline this summer.
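To see at a glance which stages already apply on a given date, the milestones can be kept in a small table. This is a minimal Python sketch; the exact days (1 August 2024, 2 February 2025, 2 August 2025, 2 August 2026) come from the Act's final provisions, while the function names are hypothetical. Later application dates for some product-related high-risk systems are deliberately left out.

```python
from datetime import date
from typing import Optional

# Milestones in chronological order (simplified; later dates omitted).
MILESTONES: list[tuple[date, str]] = [
    (date(2024, 8, 1), "AI Act enters into force"),
    (date(2025, 2, 2), "Prohibitions on unacceptable-risk AI apply"),
    (date(2025, 8, 2), "Rules for general-purpose AI (GPAI) models apply"),
    (date(2026, 8, 2), "Most high-risk obligations apply"),
]


def milestones_in_effect(on: date) -> list[str]:
    """Return the milestones that have taken effect on or before the given date."""
    return [label for start, label in MILESTONES if start <= on]


def next_milestone(on: date) -> Optional[tuple[date, str]]:
    """Return the next upcoming milestone, or None if all have passed."""
    upcoming = [(start, label) for start, label in MILESTONES if start > on]
    # MILESTONES is sorted, so the first upcoming entry is the nearest one.
    return upcoming[0] if upcoming else None
```

For example, `milestones_in_effect(date(2026, 4, 15))` returns the first three milestones, and `next_milestone` on the same date returns the August 2026 high-risk deadline.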

4. What the EU AI Act means for purchasing

Procurement has become the central hub for compliance in 2026. Since very few companies develop their AI models from scratch, most AI solutions are purchased as “Software as a Service” (SaaS). This makes procurement the “gatekeeper”.

In practice, this means that procurement must establish new due diligence procedures. Before new software is subscribed to or licensed, it must be clarified whether it contains AI components and which risk class they fall into. For a high-risk application, procurement should obtain proof of CE marking and the supporting documentation (such as the EU declaration of conformity and instructions for use) from the supplier before the contract is signed.

Contract terms are changing substantially as well: service level agreements (SLAs) should now include specific clauses on data quality, bias avoidance, and liability for incorrect algorithmic decisions. In 2026, modern procurement no longer focuses solely on functionality but also on legally compliant processes. Without the active involvement of procurement, company-wide compliance is practically impossible, because any carelessly purchased app can pose a legal risk to the entire organisation.
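The pre-contract due diligence described in this section can be sketched as a simple gate. The `VendorOffer` record, its fields and the tier strings are hypothetical, and an empty result only means that none of these particular checks failed, not that the product is compliant.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VendorOffer:
    """Hypothetical record of what procurement knows about a product before signing."""
    name: str
    contains_ai: bool
    risk_tier: Optional[str] = None  # "prohibited", "high", "limited", "minimal"; None = not assessed
    has_ce_marking: bool = False
    has_documentation: bool = False  # e.g. declaration of conformity, instructions for use


def procurement_blockers(offer: VendorOffer) -> list[str]:
    """Return reasons not to sign yet; an empty list means no blocker was found."""
    if not offer.contains_ai:
        return []
    blockers: list[str] = []
    if offer.risk_tier is None:
        blockers.append("AI components present but risk class not yet determined")
    elif offer.risk_tier == "prohibited":
        blockers.append("prohibited AI practice: do not procure")
    elif offer.risk_tier == "high":
        # High-risk products need CE marking and supplier documentation before signing.
        if not offer.has_ce_marking:
            blockers.append("CE marking missing")
        if not offer.has_documentation:
            blockers.append("supplier documentation missing")
    return blockers
```

Returning a list of reasons rather than a yes/no flag gives procurement something concrete to send back to the supplier.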

5. Deep Dive: Generative AI and Systemic Risks

A closer look reveals the complexity of general-purpose AI (GPAI): models that are not built for a specific purpose but serve as the basis for many applications (e.g. GPT-4 or similar LLMs). The EU distinguishes between standard GPAI models and those with systemic risk. A model is presumed to have systemic risk if the cumulative compute used to train it exceeds $10^{25}$ floating-point operations (FLOPs).

Such extremely powerful models could have massive societal impacts if they malfunction, for example in cybersecurity or through large-scale disinformation. Providers of these models must therefore not only fulfil transparency obligations but also evaluate their models (including adversarial testing), assess and mitigate systemic risks, report serious incidents, and ensure adequate cybersecurity. For companies that integrate these models into their own applications via an API, this means a heightened duty of care: they should verify that the underlying provider meets its obligations towards the EU AI Office, since relying on a non-compliant model can undermine their own due diligence.
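The compute threshold can be checked with simple arithmetic. The Act presumes systemic risk when training compute is greater than $10^{25}$ FLOPs, but it does not say how to estimate that figure. The sketch below assumes the common 6 × N × D rule of thumb from the scaling-law literature (N = parameters, D = training tokens), which is only an approximation for dense transformer training; the function names and example model sizes are hypothetical.

```python
# Presumption threshold: cumulative training compute greater than 10^25 FLOPs.
SYSTEMIC_RISK_THRESHOLD_FLOPS = 1e25


def estimate_training_flops(n_parameters: float, n_training_tokens: float) -> float:
    """Rough training-compute estimate via the 6 * N * D heuristic.

    Assumption: this rule of thumb comes from the scaling-law literature and is
    not a method prescribed by the AI Act.
    """
    return 6 * n_parameters * n_training_tokens


def presumed_systemic_risk(training_flops: float) -> bool:
    """True if compute exceeds the threshold (a presumption, not a final designation)."""
    return training_flops > SYSTEMIC_RISK_THRESHOLD_FLOPS
```

Under this heuristic, a hypothetical 70-billion-parameter model trained on 15 trillion tokens comes to about $6.3 \times 10^{24}$ FLOPs and stays below the threshold, while a 400-billion-parameter model on the same data (about $3.6 \times 10^{25}$ FLOPs) would exceed it.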

6. AI Literacy: The Underrated Duty for Employees

Article 4 of the EU AI Act, which has applied since February 2025, introduces a requirement that many companies have so far underestimated: promoting AI literacy. It is not enough to purchase compliant software; the people who operate it must understand how it works and where its limits lie.

Companies must ensure that their employees can critically question AI outputs. This is particularly important for preventing phenomena such as “automation bias” (blind trust in machine decisions). A trained workforce is the best insurance against misuse that could lead to liability under the new rules. Training must also be tailored to the role: a buyer needs different knowledge about AI risks than a data specialist in IT. In 2026, AI training is therefore no longer a voluntary benefit but an integral part of legal compliance.

7. Practical example: AI in the recruitment process

Let's assume a company introduces a system in 2026 that automatically scans incoming applications and creates a ranking based on the probability of success. According to Annex III of the EU AI Act, AI in the field of employment and human resources management clearly falls under the high-risk category.

In this case, it is not enough to simply use the tool. The company, as the deployer, must demonstrate that the system does not reinforce discriminatory patterns (e.g., against certain countries of origin or genders). Human oversight must be established – meaning a real person must have the right and the technical capability to ignore or correct the AI's ranking. Furthermore, all applicants must be informed that their data will be processed by such a system. If any of these steps are missing, there is not only the risk of a discrimination lawsuit but also direct intervention from supervisory authorities for violating the AI Act.
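The Act does not prescribe how a deployer should demonstrate the absence of discriminatory patterns. One common first check is to compare how often the AI ranking shortlists candidates from different groups. The Python sketch below computes per-group selection rates and the ratio of the lowest to the highest rate; the input format and group labels are assumptions, and a low ratio is a signal for human review rather than a legal test in itself.

```python
from collections import defaultdict
from typing import Iterable


def selection_rates(candidates: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Share of candidates per group that the AI ranking shortlisted.

    Each item is a (group, shortlisted) pair; group labels are hypothetical.
    """
    totals: dict[str, int] = defaultdict(int)
    selected: dict[str, int] = defaultdict(int)
    for group, shortlisted in candidates:
        totals[group] += 1
        if shortlisted:
            selected[group] += 1
    return {g: selected[g] / totals[g] for g in totals}


def rate_ratio(rates: dict[str, float]) -> float:
    """Lowest selection rate divided by the highest (1.0 means equal rates)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0
```

With 4 of 10 candidates shortlisted in group A and 2 of 10 in group B, the rates are 0.4 and 0.2 and the ratio is 0.5, a gap that a human reviewer should investigate before relying on the ranking.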

8. Compliance Checklist for Businesses: Strategic Implementation

Compliance with the EU AI Act is not a one-off project but an ongoing process that must be embedded deeply in the company's structure. To meet the legal requirements systematically in 2026, companies should follow a clear roadmap. The following checklist serves as a strategic foundation for covering all critical points, from the initial inventory to ongoing monitoring, and for minimising management's liability risk:

- Complete AI inventory: Record every tool used within the company that meets the AI definition, including AI functions hidden in existing software.

- Legal risk classification: Assign each system to one of the four risk levels and document the reasoning for future audits.

- Role determination (provider vs. deployer): Clarify legally whether you are merely a deployer or have become a provider, for example through substantial modifications to the system.

- Risk management system: For high-risk AI, establish a process that continuously identifies and minimises risks to safety and fundamental rights.

- Transparency check of interfaces: Check whether information obligations towards customers, employees and affected persons are implemented technically and procedurally.

- Implementation of human oversight: Name individuals who are technically trained to validate AI decisions and to shut down the system in emergencies.

- Data governance audit: Ensure that the datasets used are representative, as free of errors as possible, and compliant with copyright.
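The checklist lends itself to a simple internal register in which each AI system is one record and open items are derived automatically. The Python sketch below is a minimal illustration; the field names, tier strings and the rule that transparency measures are checked only for high- and limited-risk systems are simplifying assumptions, not requirements taken from the Act.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystemRecord:
    """One entry in a hypothetical internal AI inventory."""
    name: str
    risk_tier: Optional[str] = None        # "prohibited", "high", "limited" or "minimal"
    classification_rationale: str = ""     # documented reasoning for future audits
    role: Optional[str] = None             # "provider" or "deployer"
    oversight_owner: Optional[str] = None  # named person responsible for human oversight
    transparency_implemented: bool = False


def open_items(record: AISystemRecord) -> list[str]:
    """List checklist items that are still missing for this system."""
    gaps: list[str] = []
    if record.risk_tier is None:
        gaps.append("risk classification")
    elif not record.classification_rationale:
        gaps.append("documented classification rationale")
    if record.role is None:
        gaps.append("role determination")
    if record.risk_tier == "high" and not record.oversight_owner:
        gaps.append("human oversight owner")
    # Simplification: transparency is only checked for high- and limited-risk systems.
    if record.risk_tier in ("high", "limited") and not record.transparency_implemented:
        gaps.append("transparency measures")
    return gaps
```

For instance, a CV screening tool classified as high-risk with a documented rationale but no named oversight owner would still report "human oversight owner" and "transparency measures" as open items.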

9. Conclusion: Why EU AI Act Compliance is a Competitive Advantage

In summary, although the EU AI Act places enormous demands on organisations, it also offers a historic opportunity. In 2026, the era of unregulated experimentation is finally over. Companies that have done their compliance homework will gain a crucial competitive advantage: trust.

“Trust is the hardest currency in the digital age. Those who prove their algorithms are fair and secure will retain customers, while others will fail due to their lack of transparency.”

Customers, partners, and investors increasingly favour companies that can demonstrate their AI solutions are safe, fair, and transparent. Compliance with the EU AI Act protects the brand from reputational damage and prevents existential fines. Those who view the regulation not as a hurdle but as a quality feature position themselves as trustworthy players in the global digital economy. Ultimately, EU AI Act compliance ensures that technological innovation and human values go hand in hand.

10. FAQs: Frequently Asked Questions about the EU AI Act

Do I have to register every AI tool?

No. Only providers of high-risk systems must register them in the EU database (deployers that are public authorities must also register their use). However, as a deployer (user), you should maintain an internal register of the AI systems you use.

Does the EU AI Act also apply to software from the United States or China?

Yes, if the system is placed on the market or used in the EU, or if its output is used in the EU (marketplace principle).

What happens in the event of violations of the EU AI Act?

Fines of up to €35 million or 7% of worldwide annual turnover, whichever is higher, may be imposed for the most serious violations, such as prohibited AI practices. Other violations, including documentation errors, are subject to lower fines.
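The upper limit is simply the larger of two numbers: the fixed amount and the percentage of worldwide annual turnover. A minimal Python sketch, with the top-tier values as defaults (the function name is hypothetical, and lower tiers would pass smaller values):

```python
def max_fine_eur(worldwide_annual_turnover_eur: float,
                 fixed_cap_eur: float = 35_000_000.0,
                 turnover_share: float = 0.07) -> float:
    """Upper limit of a fine: fixed amount or turnover share, whichever is higher."""
    return max(fixed_cap_eur, turnover_share * worldwide_annual_turnover_eur)
```

For a company with €100 million in turnover, 7% would be only €7 million, so the cap is the fixed €35 million; for a turnover of €2 billion, the percentage-based cap of about €140 million applies.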

Are there any exceptions for small businesses (SMEs)?

SMEs benefit from simplified documentation requirements and priority access to so-called “regulatory sandboxes” for testing their innovations, but must still comply with the basic safety rules.